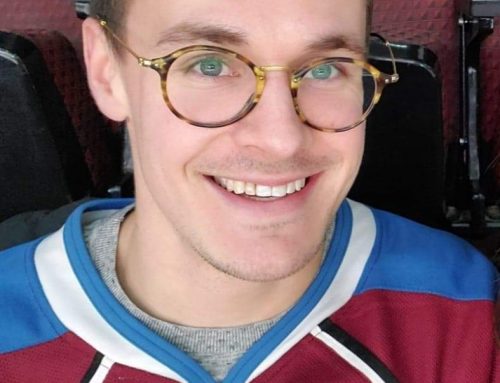

Bartek Chomanski is in his second year as a postdoc at the Rotman Institute, on a fellowship jointly funded by the Rotman Institute of Philosophy, the Faculty of Science, and the Faculty of Engineering. He has research interests in philosophy of mind and the ethics of emerging technologies, especially AI and robotics. He recently completed a virtual interview with Rotman Associate Director, Eric Desjardins.

Can you tell us a little about your educational background and what drove you to become a philosopher?

I completed my undergraduate degree in general humanities, but even then I found philosophy classes to be among the most interesting and rewarding. In particular, I liked the fact that the philosophical style of thinking could be fruitfully applied to so many different topics, from politics to consciousness. I continued my education with a Master's and a PhD in philosophy, focusing on philosophy of mind and applied ethics.

What specific project(s) are you working on at the moment?

There are a few different projects I’m pursuing now. One has to do with the question of responsibility for autonomous artificial agents. The more autonomous AI-powered machines become, the more difficult it is to clearly assign liability for what they do; after all, it seems nonsensical to hold the machines themselves responsible. But then, who else should it be? Those who use the machines, those who design them, or perhaps no one at all? My second project is devoted to examining the proposals to regulate multinational companies using AI algorithms in delivering their products, from Amazon to Twitter. I am especially interested in examining both the normative case for regulation (e.g. what good moral reasons are there, if any, to regulate these companies in the first place, and what sorts of regulations are morally best justified) and the empirical assumptions about its effectiveness. These projects focus on technologies that are currently being used, or can realistically be expected to hit the markets in the near future. I also have an interest in the ethics of very speculative AI, like conscious, human-like robots.

Could you tell us briefly about the two courses you’re co-teaching this year?

The first is “Ethical and Societal Implications of AI”, which is a graduate course in the Department of Philosophy, also open to students in the Collaborative Specialization in Artificial Intelligence and the Masters of Data Analytics Specialty in Artificial Intelligence.

The course covers some of the major themes in ethics of AI, robotics, and automation, concerning both present-day (algorithmic bias and opacity; trust in AI; deepfakes; AI and social media) and near- and long-term future uses of these technologies (autonomous vehicles and weapons, machine consciousness and AI personhood, and the technological singularity). We examine what sorts of social impact they are likely to have and evaluate this impact with the tools of ethical theory, applied ethics, and a smattering of political philosophy. The readings feature a variety of perspectives from a range of professionals: philosophers, AI researchers, social scientists, lawyers, and tech journalists, among others.

I’ve also developed and am co-teaching a Computer Science course cross-listed with Electrical and Computer Engineering, and Statistics. The course is called “Artificial Intelligence and Society: Ethical and Legal Challenges” and is team-taught with people from the Western Law School and the Department of Computer Science. In addition to covering more academic topics, it will also include guest speakers from the City of London and Royal Bank of Canada. The aim is to introduce students to selected ethical and legal frameworks relevant to artificial intelligence, data science and big data in the context of their societal, economic and political applications in contemporary society.

If there is one thing that you really want the students to remember from taking your courses, what would it be?

That philosophical reflection about AI and similar technologies allows us to better appreciate both the challenges and the opportunities flowing from their deployment, and makes us more alert to the inevitable trade-offs between different values that using these technologies will necessitate. One example is the question of whether we should use opaque, ‘black-box’ algorithms to improve predictive accuracy in some crucial domain like medicine, thus sacrificing explainability for better health outcomes.

When you are not busy being a philosopher, what do you like to do?

I really enjoy running and biking — London has some very scenic trails! — and playing fantasy soccer (though the way this season is going for me, I’m not sure “enjoy” is the right word).